I stumbled across this presentation by Dave Hahn, a senior SRE at Netflix, in which he gives an entertaining keynote on how DevOps works at Netflix. I highly recommend watching it; it’s about 20 minutes long. For those of you short on time, though, I’m going to touch on some of my key takeaways in the post below.

Spoiler Alert! This post is not about DevOps. What it is about is the application of Lean principles.

Throughout the presentation, Dave outlines what they ‘don’t do’ at Netflix and what they ‘do focus on’. It just so happens that this has resulted in the behaviours and benefits outlined in DevOps. What strikes me is that this talk provides great examples of Lean principles actually being applied to management decision-making, and it touches on some of the intended and unintended benefits. These include higher levels of innovation, a focus on the customer and a clear sense of what differentiates Netflix as a service. So how does this all work?

Lean thinking at Netflix

Firstly, it is apparent that Netflix has a clear mission statement: ‘to win those moments of truth’. This is the north star against which they validate how they operate, hire and promote the desired management behaviours, as well as how and where they invest their resources. They appear to apply a laser-like focus to directing all of their resources towards achieving it.

One example of this is building a purpose-built CDN (content delivery network) platform. Netflix used to buy this as a service, but realised that it was an expensive overhead from a vendor whose value drivers and overarching strategy were not aligned with theirs. Hence, they built their own. This enabled them to offer ISPs free caching appliances, deployed within the ISP’s network, reducing the ISP’s transit costs but, more importantly, ensuring that ‘more Netflix is nearer to the point of consumption’ and that you and I have a better customer experience.

Netflix is a data-driven company: data is at the core of their decision-making, from identifying what shows to produce, to selecting the ideal director to realise the intended vision, to choosing which actors are most likely to capture the audience’s attention. Though data is one of their core assets, they have realised that managing everything within the data ecosystem isn’t a valuable use of their resources. Hence, all of their hundreds of microservices have run in the cloud since 2016. This is a clear example of identifying elements of the value chain (data centres, in this instance) that are a necessity but provide no clear, relatable benefit to the customer, which Netflix classes as ‘undifferentiated heavy lifting’, and then outsourcing them.

Their first priority is keeping engineers doing fun, exciting, challenging work so that they maintain, if not increase, their ‘velocity of innovation’. This means that they actively look to remove bureaucracy, because employing someone to dream up every relevant guardrail whilst trying to promote a fast-paced Agile organisation is a contradiction in terms. Instead, they empower employees both to make their own decisions and to take responsibility for them. For example, all of their engineers have access to the production environment. For anybody who is not technical, this is like an airline allowing all of its technical staff to make changes to the engines whilst cruising at 40,000 feet.

To ensure that our jet doesn’t stall mid-air, Netflix has built a set of tools that automate testing and quality checks before new features are pushed into production. The result is that you and I enjoy the latest features that help us select, preview and decide what programme to watch next. By building these support tools to empower decision-making, they have also removed the bureaucracy of release windows, along with the overhead of training engineers on new programming languages and toolsets purely to safeguard against potential errors or bugs. All of this would be time-consuming and would ultimately slow the rate of innovation.

Observations

This, I assume, has evolved over time, and it reminds me of the adaptive organisation approach outlined in Eric Ries’s book, The Lean Startup. This involves applying the Five Whys technique when an issue or fault has occurred to identify its root cause, and then, depending on the cost of the fault, investing proportionally in a fix. In Netflix’s case, this has resulted in an array of open source tools that make it easier for employees to launch their latest ideas whilst mitigating the risk of bringing down the whole platform.

This does not mean that Netflix is a nirvana to work at. By their own admission, they spend a considerable amount of time ensuring the ‘cultural fit’ of applicants when hiring. Hence, you don’t just have to be one of the best and brightest in your field; you must also be aligned with Netflix’s core values.

Yes, their service has experienced downtime, as is well documented on Twitter, but this has not been caused by disgruntled employees, and thanks to tools like Chaos Monkey, they react quickly when it does occur.

This calculated risk is one that their customers are more than willing to overlook. I would say ‘forgive’, but that implies that customers consciously feel they have been wronged and must therefore engage emotionally in a process of forgiveness. Instead, I would argue that the quality of the service, and of the content they now produce themselves, meets their customers’ primary needs and desires, allowing customers to bypass this emotion altogether. No easy feat!

So what’s the takeaway?

A clear vision and mission statement, coupled with a management culture that is obsessive about the customer experience, appears to be a consistent theme across the FAANG behemoths. Whilst drafting this post, I stumbled across the blog of Gergely Orosz, an ex-Uber employee. He provides similar insights in his article ‘What Silicon Valley “Gets” about Software Engineers that Traditional Companies Do Not’. The article highlights a number of parallels: empowering intelligent people, removing bureaucracy, and encouraging transparency about both projects and the business’s performance.

I haven’t worked at a FAANG organisation, so, like you, I am decoding what is shared in the public domain. And, like all companies and anyone with a social media account, they will be guilty of promoting their idealised selves.

All organisations have failings, and some of their priorities and values will not transfer to every industry. For example, a healthcare provider that prioritised velocity of innovation over everything else would be at odds with the Hippocratic oath. I’m sure that this hypothetical organisation would produce some interesting but clearly ethically questionable results.

I have now worked in a number of organisations, all with different cultures, and all hell-bent on finding the elusive ‘secret sauce’ to continually increase market share, revenue and growth and/or improve margins. Unsurprisingly, there is a tendency to try to mimic the core capabilities of a market leader in a different sector.

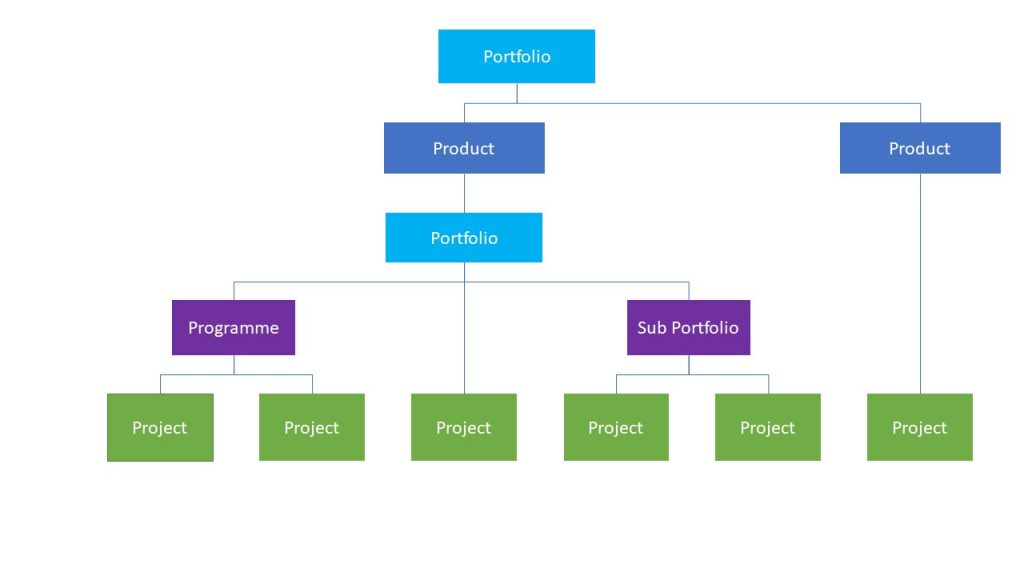

A recent example within the Project Management community is job postings requesting that candidates have experience of Agile at scale, akin to the Spotify Model. This bemuses me because, firstly, the talent pool that actually has this experience is likely to be small, and secondly, those who do have it are unlikely to be willing to work for an organisation that is trying to mimic this capability, especially if that organisation does not already demonstrate the cultural foundations needed to make it a success.

That is not to say that we cannot learn from our peers in other domains. But when doing so, we need to be aware of the trade-offs they have made to achieve success, as well as their value streams and how they are configured overall. We also need to understand the root cause of a desired capability. In this case, it is a management culture that, by design or by chance, champions Lean principles and just so happens to produce a great DevOps capability.

Final thoughts…

Writing this has got me thinking about what I do on a regular basis and how I can apply Lean thinking to my daily practice.

I am guilty, and maybe you are too, of having a wide and varied array of interests, which can cause me to become distracted or to expend effort on ‘undifferentiated heavy lifting’ when trying to solve problems. This feels especially true in a world with an abundance of cloud-delivered tools that enable entrepreneurs and tinkerers to explore a vast range of possibilities, as alluded to by Chris Anderson in The Long Tail: everything that can be conceived will be.

Consequently, in a world full of social media updates, emails, instant messages and an abundance of tools, capabilities and new concepts, the real skill, or ‘secret sauce’, is balancing openness to new ideas and learning with not allowing yourself to be distracted from your objective. This requires periodically reviewing your overarching goals and, where possible, removing or outsourcing waste. Personally, if I manage to do this consistently, I believe that I’ll achieve my intended benefits, but, as Netflix has found, I may also stumble across some unintended ones.